groq_whisper_stt

A Flutter plugin for real-time speech-to-text using Groq's Whisper API. Captures audio from the device microphone, detects speech via voice activity detection, and streams transcription results.

Features

- Real-time transcription — streams results as you speak

- Voice activity detection — energy-based VAD with adaptive noise floor, only sends audio when speech is detected

- Chunk overlap & deduplication — 500ms overlap between chunks with boundary word dedup to avoid repeated or cut words

- Prompt chaining — feeds previous transcription context to Whisper for better continuity

- Retry with backoff — handles rate limits (429) and server errors (5xx) automatically

- Word timestamps — optional word-level timing from Whisper

- Configurable — model selection, chunk duration, silence timeout, language, and more

Platform Support

| Platform | Minimum Version | Audio Backend |

|---|---|---|

| Android | API 24 (7.0) | AudioRecord with VOICE_RECOGNITION source |

| iOS | 14.0 | AVAudioEngine with AVAudioConverter |

Getting Started

1. Get a Groq API Key

Sign up at console.groq.com and create an API key.

2. Install

Add to your pubspec.yaml:

dependencies:

groq_whisper_stt: ^0.1.0

3. Platform Setup

Android — add to android/app/src/main/AndroidManifest.xml:

<uses-permission android:name="android.permission.RECORD_AUDIO"/>

<uses-permission android:name="android.permission.INTERNET"/>

Set minSdk to at least 24 in android/app/build.gradle:

android {

defaultConfig {

minSdk = 24

}

}

iOS — add to ios/Runner/Info.plist:

<key>NSMicrophoneUsageDescription</key>

<string>This app needs microphone access for speech-to-text.</string>

Usage

Basic Example

import 'package:groq_whisper_stt/groq_whisper_stt.dart';

final stt = GroqWhisperStt(

apiKey: 'your-groq-api-key',

config: const SttConfig(

model: WhisperModel.largev3Turbo,

language: 'en',

),

);

// Initialize

await stt.initialize();

// Listen for transcription results

stt.transcriptionStream.listen((result) {

print(result.text); // This chunk's text

print(result.sessionText); // Full session transcript

print(result.isFinal); // True when speech segment ends

});

// Listen for state changes

stt.stateStream.listen((state) {

// idle -> listening -> recording -> processing -> listening

print(state);

});

// Listen for errors

stt.errorStream.listen((error) {

print(error.message);

});

// Start / stop

await stt.start();

await stt.stop();

// Clean up

stt.dispose();

Configuration

const config = SttConfig(

model: WhisperModel.largev3Turbo, // or WhisperModel.largev3

language: 'en', // ISO-639-1, null for auto-detect

chunkDuration: Duration(seconds: 3), // How often to send audio during speech

silenceTimeout: Duration(milliseconds: 800), // Silence before finalizing

minSpeechDuration: Duration(milliseconds: 250), // Minimum speech to trigger

enableWordTimestamps: true, // Get word-level timing

prompt: 'Technical discussion', // Context hint for Whisper

temperature: 0.0, // 0.0 = deterministic

maxRetries: 3, // Retry on transient errors

retryDelay: Duration(milliseconds: 500), // Base retry delay

);

Models

| Model | ID | Description |

|---|---|---|

WhisperModel.largev3 |

whisper-large-v3 |

Max accuracy, multilingual (1550M params) |

WhisperModel.largev3Turbo |

whisper-large-v3-turbo |

Faster inference, slightly lower accuracy (809M params) |

Transcription Result

Each SttResult on the stream contains:

| Field | Type | Description |

|---|---|---|

text |

String |

Transcribed text for this chunk |

sessionText |

String |

Cumulative text for the entire session |

isFinal |

bool |

true when a speech segment ends |

words |

List<WordTimestamp>? |

Word-level timestamps (if enabled) |

detectedLanguage |

String? |

Language detected by Whisper |

audioDuration |

Duration |

Duration of the audio chunk |

avgLogProb |

double? |

Average log probability (confidence proxy) |

noSpeechProb |

double? |

Probability that the chunk contains no speech |

State Machine

idle -> initializing -> listening -> recording -> processing -> listening

^ |

|_______________________________________|

- idle — not started

- initializing — warming up

- listening — mic open, waiting for speech

- recording — speech detected, buffering audio

- processing — sending audio to Groq API

- paused — paused by user (call

resume()to continue) - error — error occurred

Testing

The constructor accepts injectable AudioRecorder and GroqApiClient for unit testing:

final stt = GroqWhisperStt(

apiKey: 'test',

recorder: MockAudioRecorder(),

apiClient: MockGroqApiClient(),

);

How It Works

Microphone -> VAD -> Audio Buffer -> WAV Encode -> Groq API -> Result Assembly -> Stream

| |

Ring Buffer 500ms overlap

(300ms pre-speech) (avoids cut words)

- Microphone captures 16kHz mono 16-bit PCM audio in 20ms frames

- VAD detects speech using energy-based analysis with an adaptive noise floor

- Ring buffer retains 300ms of pre-speech audio so utterance beginnings aren't clipped

- Audio chunks are sent to Groq every

chunkDuration(default 3s) during continuous speech, with 500ms overlap - Result assembler deduplicates overlapping words at chunk boundaries and chains prompt context

- Chunks with

noSpeechProb > 0.9are silently dropped

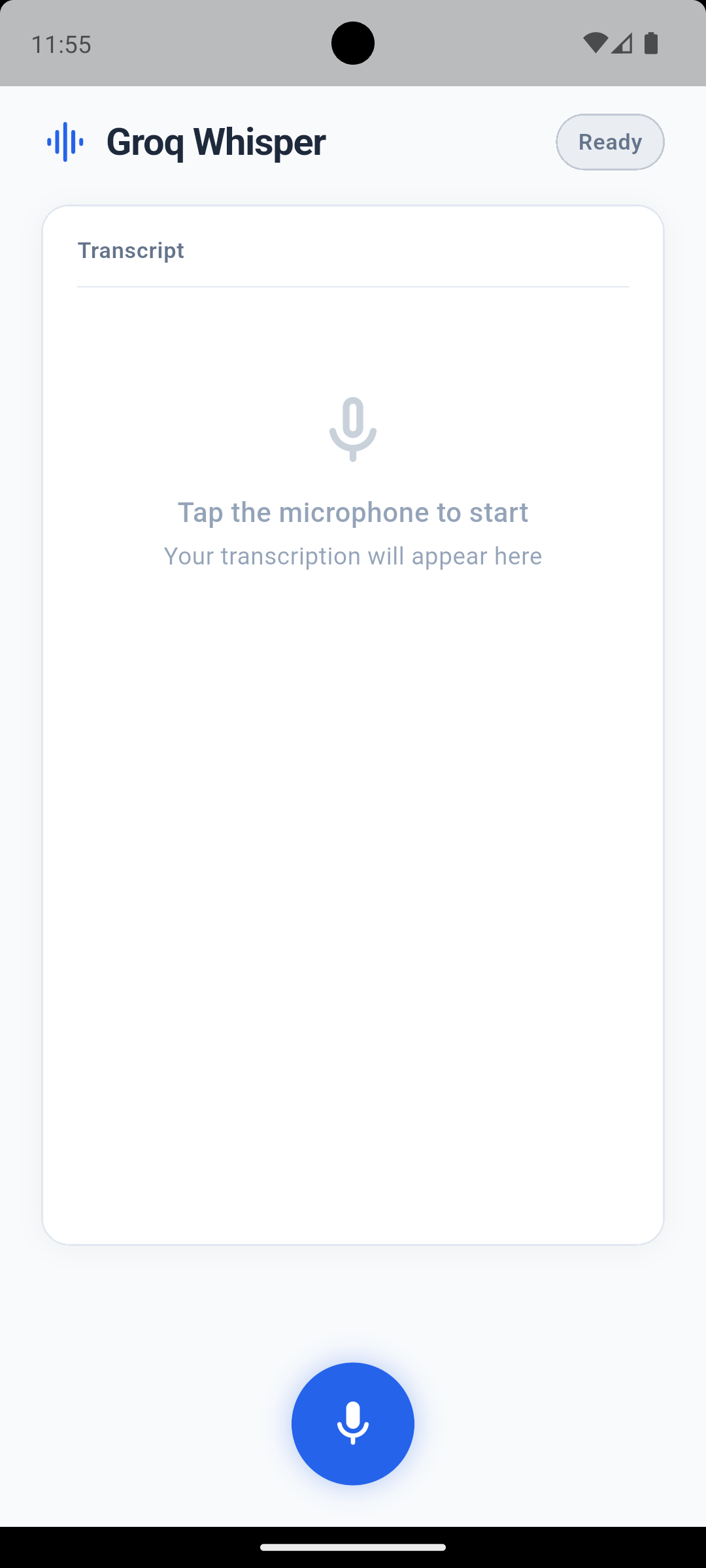

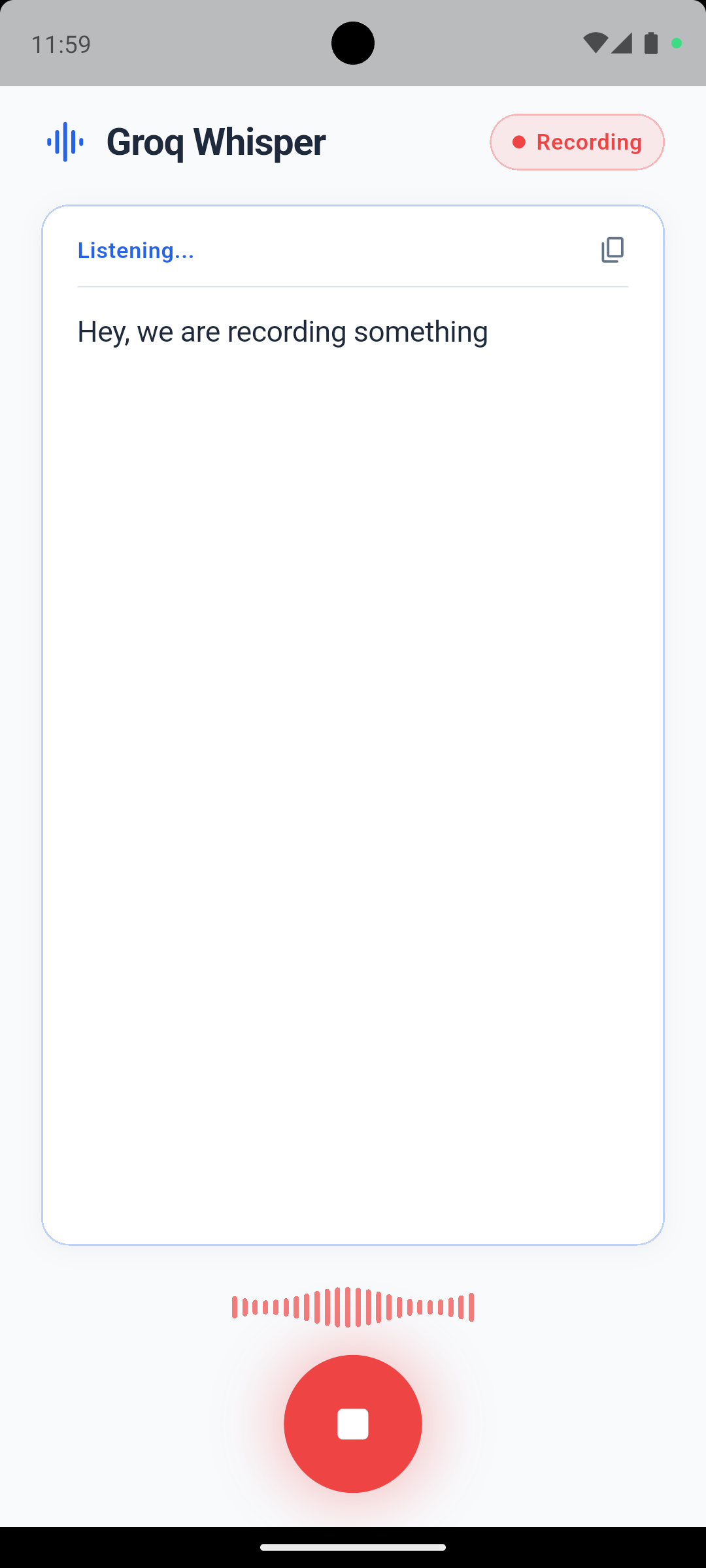

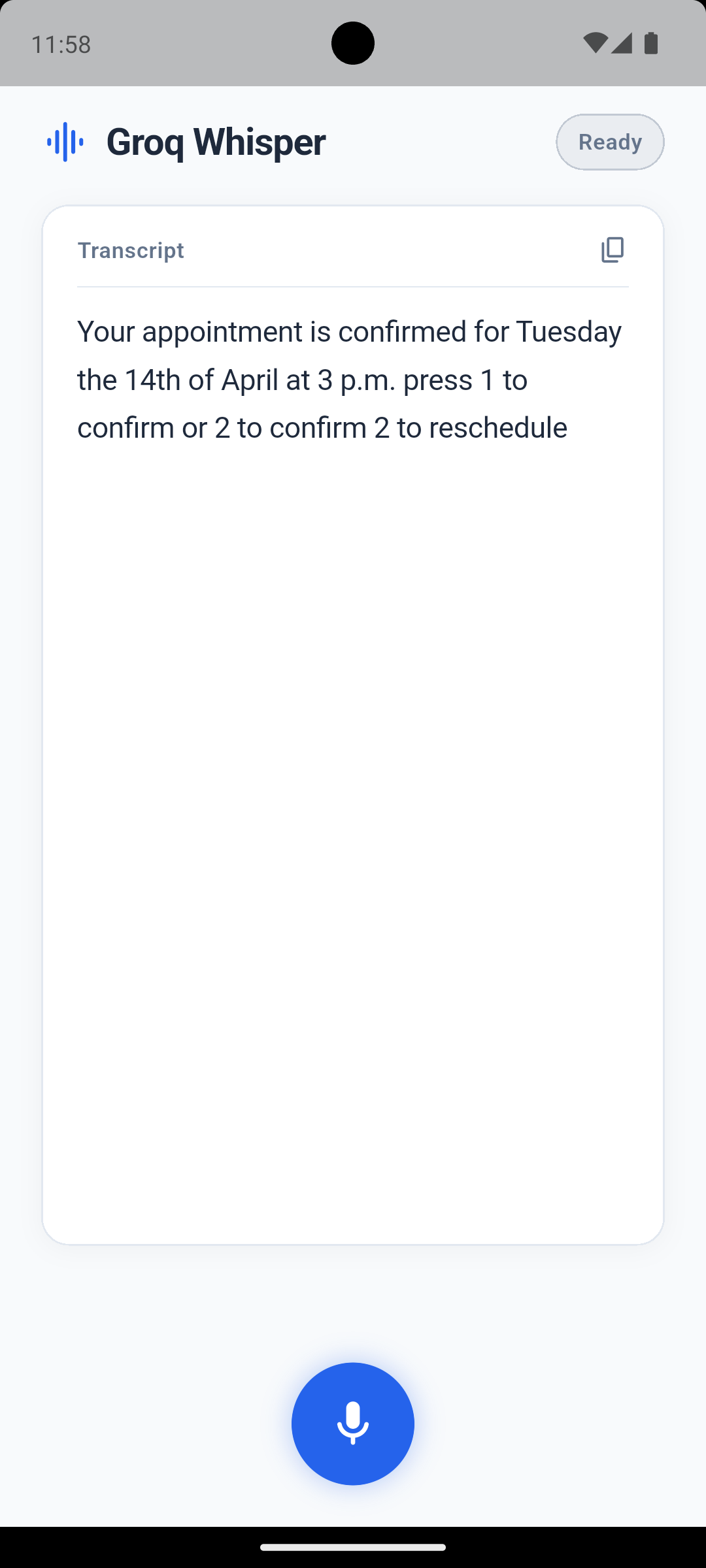

Example App

See the example directory for a complete demo app. Run it with:

cd example

flutter run --dart-define=GROQ_API_KEY=gsk_your_key_here

License

MIT License. See LICENSE for details.

Libraries

- groq_whisper_stt

- Real-time speech-to-text for Flutter using Groq's Whisper API.